Reddit processes over 2 billion comments per year. And a meaningful percentage of those comments are fake.

So how does Reddit actually detect them?

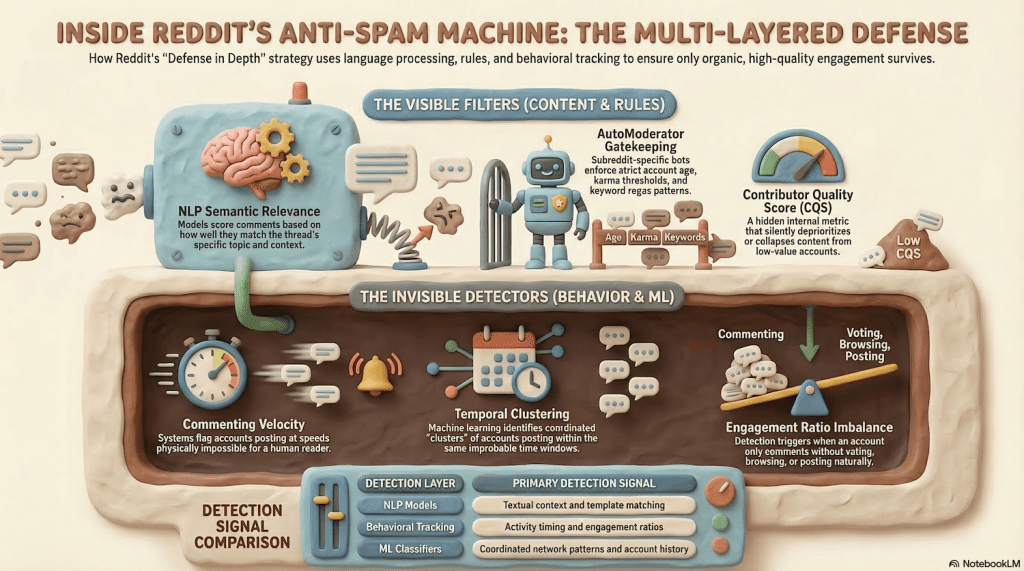

Not through some single magic filter. Reddit uses a layered detection system - NLP models, behavioral analysis, community moderation, and machine learning classifiers all working together.

Understanding how Reddit detects fake comments is the difference between running a campaign that works and watching your comments vanish into the void.

Here’s exactly how each layer operates.

Reddit’s NLP Comment Analysis: The First Line of Defense

Reddit’s comment spam filter starts at the language level.

Natural Language Processing (NLP) models scan every comment for signals that separate genuine engagement from manufactured text. Modern NLP classifiers can identify synthetic or templated text with high accuracy when trained on platform-specific datasets.

Published research suggests accuracy rates in the 90%+ range for well-tuned models, though Reddit has not disclosed the specifics of its own classifiers.

Here’s what Reddit’s NLP-based comment spam filter evaluates:

Contextual relevance scoring: The model compares your comment’s semantic content against the original post and existing thread. A comment about “great product, highly recommend” under a post asking about hiking trails in Colorado scores near zero for relevance. Genuine comments reference specific details from the post - names, numbers, context clues.

Sentiment pattern detection: Generic positive language triggers flags. Phrases like “this is amazing,” “totally agree,” or “great post” with no substantive addition get scored as low-quality. Reddit’s models specifically watch for promotional sentiment - language patterns common in astroturfing campaigns.

Template matching: If your comment shares structural similarity with known spam templates - same sentence patterns, same keyword density, same persuasion architecture - the NLP model catches it. Researchers have documented how platforms use n-gram analysis and TF-IDF scoring to flag templated content across large datasets.
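As a rough illustration of the template-matching idea, here is a minimal sketch that scores a comment against a small set of known spam templates using word n-grams, TF-IDF weights, and cosine similarity. It is not Reddit's implementation, which has not been published; the function names, the choice of bigrams, and the sample templates are all assumptions made for the example.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())


def ngram_features(tokens, n=2):
    """Return unigrams plus word n-grams as feature strings."""
    grams = list(tokens)
    grams += [" ".join(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]
    return grams


def tfidf_vectors(docs, n=2):
    """Build one sparse TF-IDF vector (a dict) per document."""
    counts = [Counter(ngram_features(tokenize(d), n)) for d in docs]
    doc_freq = Counter()
    for c in counts:
        doc_freq.update(c.keys())
    total = len(docs)
    vectors = []
    for c in counts:
        # Smoothed IDF: terms found in every document keep a non-zero weight.
        vectors.append({
            term: tf * (math.log((1 + total) / (1 + doc_freq[term])) + 1.0)
            for term, tf in c.items()
        })
    return vectors


def cosine(a, b):
    """Cosine similarity between two sparse vectors."""
    dot = sum(w * b.get(t, 0.0) for t, w in a.items())
    norm_a = math.sqrt(sum(w * w for w in a.values()))
    norm_b = math.sqrt(sum(w * w for w in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def max_template_similarity(comment, templates, n=2):
    """Highest similarity between the comment and any known template."""
    vectors = tfidf_vectors(list(templates) + [comment], n)
    comment_vec = vectors[-1]
    return max(cosine(comment_vec, v) for v in vectors[:-1])


# Hypothetical templates, for illustration only.
TEMPLATES = [
    "great product highly recommend it to everyone",
    "this is amazing totally agree great post",
]
```

A near copy of a template scores close to 1, while a comment that shares no vocabulary with any template scores 0. Any cutoff between those extremes would have to be tuned on labeled data.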

The key insight: Reddit’s NLP analysis isn’t just checking individual comments. It’s comparing your comment against millions of previously-flagged spam comments to find statistical similarities.

AutoModerator: The Subreddit Gatekeeper

Before Reddit’s sitewide systems even see your comment, AutoModerator might kill it.

AutoModerator is a rule-based bot that moderators configure per subreddit. According to Reddit’s Moderator Help documentation, AutoModerator can enforce rules on comments based on dozens of parameters.

The most common comment filters include:

- Account age minimums. Many subreddits require accounts to be 7-30 days old before commenting. Some high-value subreddits like r/science require 90+ days.

- Karma thresholds. Minimum comment karma requirements range from 10 to 500+ depending on the subreddit.

- Keyword filters. Regex patterns catch promotional language, specific URLs, brand mentions, and known spam phrases.

- Domain blacklists. Any comment containing a URL from a blacklisted domain gets auto-removed.
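Real AutoModerator rules are written as YAML in a subreddit's configuration, so the following Python is only a sketch of the logic the four filters above describe. Every threshold, regex, and domain in it is a hypothetical value chosen for illustration, not a rule taken from any actual subreddit.

```python
import re
from urllib.parse import urlparse

# Hypothetical per-subreddit settings; real values differ by community.
RULES = {
    "min_account_age_days": 30,
    "min_comment_karma": 50,
    "keyword_pattern": re.compile(r"\b(promo code|discount|buy now)\b", re.IGNORECASE),
    "blocked_domains": {"spam.example"},
}

URL_PATTERN = re.compile(r"https?://[^\s)\]]+")


def removal_reason(body, account_age_days, comment_karma, rules=RULES):
    """Return the first rule the comment violates, or None if it passes."""
    if account_age_days < rules["min_account_age_days"]:
        return "account too new"
    if comment_karma < rules["min_comment_karma"]:
        return "karma too low"
    if rules["keyword_pattern"].search(body):
        return "blocked keyword"
    for url in URL_PATTERN.findall(body):
        host = (urlparse(url).hostname or "").lower()
        # Match the blocked domain itself and any of its subdomains.
        if any(host == d or host.endswith("." + d) for d in rules["blocked_domains"]):
            return "blocked domain"
    return None
```

Because each subreddit would carry its own `RULES`, the same comment can pass in one community and be removed in another.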

Here’s what makes AutoModerator particularly effective against bulk comment campaigns: each subreddit has different rules. A comment that passes in r/technology might get instantly removed in r/cryptocurrency. There’s no universal template that works everywhere.

Moderators share AutoModerator configurations through communities like r/AutoModerator and r/ModSupport. When one moderator identifies a new spam pattern, that regex pattern can spread to hundreds of subreddits within days.

Behavioral Signals Reddit Tracks on Comments

Language analysis catches bad comments. Behavioral analysis catches bad commenters.

Reddit monitors activity patterns that no amount of well-written text can disguise:

Commenting velocity: Dropping five comments across different subreddits, each within 60 seconds of its post going live, is not something a human who reads and responds thoughtfully can realistically do. Reddit’s systems flag accounts that comment faster than a human reasonably could.

Comment length uniformity: Real users write comments of wildly varying lengths - some one-liners, some multi-paragraph responses. If all your comments fall within a narrow character range (say, 150-200 characters consistently), that statistical uniformity signals automation.

Engagement ratio imbalances: Reddit tracks the ratio between commenting, voting, browsing, and posting. An account that comments 50 times per day but never upvotes, never browses, and never posts has an engagement fingerprint that screams “bot.”

Understanding how comments and upvotes work together as engagement signals helps explain why balanced activity matters so much to detection systems.

Research from social media observatories, including Indiana University’s Observatory on Social Media, has shown that engagement ratio analysis is one of the most reliable bot detection methods across all social platforms.
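The velocity, length-uniformity, and engagement-ratio signals described above can each be approximated with a simple statistic. The sketch below is an assumption about how such features might be computed, not a description of Reddit's pipeline; the function names and the 60-second window are illustrative, and browsing activity is left out because it is not observable from public data.

```python
from statistics import mean, pstdev


def max_comments_in_window(timestamps, window_seconds=60):
    """Largest number of comments falling inside any sliding time window."""
    ordered = sorted(timestamps)
    best = 0
    start = 0
    for end, current in enumerate(ordered):
        # Shrink the window from the left until it spans at most window_seconds.
        while current - ordered[start] > window_seconds:
            start += 1
        best = max(best, end - start + 1)
    return best


def length_variation(comments):
    """Coefficient of variation of comment lengths; values near 0 mean uniform."""
    lengths = [len(c) for c in comments]
    if len(lengths) < 2 or mean(lengths) == 0:
        return 0.0
    return pstdev(lengths) / mean(lengths)


def comment_share(comments, votes, posts):
    """Fraction of an account's recorded actions that are comments."""
    total = comments + votes + posts
    return comments / total if total else 0.0
```

An account with a high `max_comments_in_window`, a `length_variation` near zero, and a `comment_share` near 1.0 matches the profile the text describes. The thresholds that separate suspicious from normal would need to come from real data.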

Time-of-day patterns: Humans sleep. Humans have jobs. An account commenting uniformly across all 24 hours of the day, seven days a week, fails basic human behavior modeling.

Thread depth avoidance: Real users engage in back-and-forth conversations - replies to replies to replies. Fake comment campaigns almost always target top-level comments only, avoiding threaded discussions. This creates a measurable pattern: high top-level comment volume with zero nested engagement.
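The last two signals can be sketched the same way. Here, time-of-day uniformity is measured as the normalized entropy of an hour-of-day histogram, and thread depth avoidance as the share of comments whose parent is a post rather than another comment. The entropy measure is an assumed choice for this example; the `t3_` prefix check relies on Reddit's API convention that post fullnames start with `t3_` and comment fullnames with `t1_`.

```python
import math
from collections import Counter
from datetime import datetime, timezone


def hour_entropy(timestamps):
    """Normalized Shannon entropy (0 to 1) of the UTC hour-of-day histogram.

    1.0 means activity is spread evenly over all 24 hours;
    0.0 means every comment falls in the same hour.
    """
    hours = Counter(
        datetime.fromtimestamp(t, tz=timezone.utc).hour for t in timestamps
    )
    total = sum(hours.values())
    if total == 0:
        return 0.0
    entropy = -sum((c / total) * math.log2(c / total) for c in hours.values())
    return entropy / math.log2(24)


def top_level_share(parent_ids):
    """Fraction of comments that reply directly to a post."""
    if not parent_ids:
        return 0.0
    top_level = sum(1 for p in parent_ids if p.startswith("t3_"))
    return top_level / len(parent_ids)
```

Entropy near 1.0 combined with a `top_level_share` of 1.0 is the pattern described above. Long account histories and travel across time zones also raise entropy, so this is better treated as one feature among several than as a verdict on its own.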

Machine Learning Classifiers: The Backend System

Behind the visible tools, Reddit runs ML classifiers trained specifically on known spam comment datasets.

According to Reddit’s transparency and policy documentation, the platform removes tens of millions of pieces of content through automated detection systems each year. A significant portion of those are comments flagged by machine learning before any human ever sees them.

These classifiers operate on a different level than NLP text analysis. They evaluate account-level patterns across the comment history:

Cross-thread pattern detection: If 15 accounts all comment on the same post within a 30-minute window, and those accounts share creation date ranges, karma profiles, or activity patterns, the classifier flags the entire cluster.

This is how Reddit catches coordinated astroturfing campaigns.
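A minimal sketch of that kind of cluster check, under stated assumptions: comments arrive as `(post_id, account, timestamp)` tuples, account creation times are known, and "shared creation date range" means all accounts in the group were created within a few days of each other. The function name and default thresholds are hypothetical. The 15 accounts and 30-minute window mirror the example above.

```python
from collections import defaultdict


def flag_clusters(comments, created_at, window=1800, min_accounts=15,
                  max_creation_spread_days=7):
    """Return {post_id: accounts} for suspicious groups of commenters.

    comments:   iterable of (post_id, account, unix_timestamp)
    created_at: mapping of account -> account creation unix_timestamp
    """
    by_post = defaultdict(list)
    for post_id, account, ts in comments:
        by_post[post_id].append((ts, account))

    flagged = {}
    for post_id, events in by_post.items():
        events.sort()
        start = 0
        for end in range(len(events)):
            # Keep only comments within `window` seconds of the newest one.
            while events[end][0] - events[start][0] > window:
                start += 1
            group = {account for _, account in events[start:end + 1]}
            if len(group) < min_accounts:
                continue
            created = [created_at[a] for a in group]
            if max(created) - min(created) <= max_creation_spread_days * 86400:
                flagged.setdefault(post_id, set()).update(group)
    return flagged
```

A production classifier would presumably weigh many more shared traits, such as karma profiles and activity patterns. This sketch checks only timing and creation date.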

Network graph analysis: Reddit maps relationships between accounts - who replies to whom, who upvotes whose comments, who appears in the same threads repeatedly.

Inauthentic networks create detectable graph structures that differ from organic user interaction patterns.
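One simple way to picture this is a co-occurrence graph: connect two accounts when they appear together in several threads, then look at the connected groups that remain. The sketch below is an assumed, simplified version of that idea. It uses thread co-occurrence only, ignoring replies and votes, and the `min_shared` threshold is hypothetical.

```python
from collections import Counter, defaultdict
from itertools import combinations


def co_occurrence_edges(threads, min_shared=3):
    """Return account pairs that appear together in at least min_shared threads.

    threads: mapping of thread_id -> collection of account names
    """
    pair_counts = Counter()
    for accounts in threads.values():
        for a, b in combinations(sorted(set(accounts)), 2):
            pair_counts[(a, b)] += 1
    return {pair: n for pair, n in pair_counts.items() if n >= min_shared}


def connected_groups(edges):
    """Group accounts into connected components of the co-occurrence graph."""
    graph = defaultdict(set)
    for a, b in edges:
        graph[a].add(b)
        graph[b].add(a)

    seen, groups = set(), []
    for node in list(graph):
        if node in seen:
            continue
        stack, group = [node], set()
        while stack:
            current = stack.pop()
            if current in group:
                continue
            group.add(current)
            stack.extend(graph[current] - group)
        seen |= group
        groups.append(group)
    return groups
```

Accounts that keep turning up together form a dense group, while users who overlap once or twice by chance never reach the edge threshold.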

Temporal clustering: The ML system watches for comments that arrive in statistically improbable clusters - too many comments, too fast, too coordinated. Even when individual comments look natural, the timing pattern across a campaign reveals artificial coordination.
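"Statistically improbable" can be made concrete with a baseline model. The sketch below assumes comment arrivals in a fixed window follow a Poisson distribution with a known baseline rate, and flags windows whose count would be very unlikely under that baseline. The Poisson assumption, the window size, the baseline rate, and the significance level are all illustrative choices, not documented Reddit parameters.

```python
import math
from collections import Counter


def poisson_tail(k, lam):
    """P(X >= k) for X ~ Poisson(lam)."""
    if k <= 0:
        return 1.0
    term = math.exp(-lam)      # P(X = 0)
    cdf = term
    for i in range(1, k):
        term *= lam / i        # P(X = i) from P(X = i - 1)
        cdf += term
    return max(0.0, 1.0 - cdf)


def burst_windows(timestamps, window=600, baseline_rate=2.0, alpha=1e-4):
    """Return (window_start, count) for windows with improbably many comments.

    baseline_rate is the expected number of comments per window and would,
    in practice, be estimated from the thread's or subreddit's history.
    """
    counts = Counter(int(t // window) for t in timestamps)
    return [
        (bucket * window, n)
        for bucket, n in sorted(counts.items())
        if poisson_tail(n, baseline_rate) < alpha
    ]
```

With a baseline of two comments per ten minutes, a window holding one comment is unremarkable, while a window holding twenty is flagged even if each comment reads naturally on its own.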

CQS: The Silent Comment Killer

Reddit’s Contributor Quality Score (CQS) might be the most important factor most people don’t know about.

CQS is an internal score Reddit assigns to every account. It measures how much value your contributions add to the platform. Reddit’s help documentation on CQS confirms that low-CQS accounts have their content silently deprioritized.

For comments specifically, a low CQS means:

- Your comments may not appear in “Best” sort order even if they receive upvotes

- Your comments might be collapsed by default

- In some subreddits, your comments get silently filtered without any removal notification

The dangerous part: You won’t know it’s happening. There’s no “your comment was filtered” notification. Your comment appears normal from your perspective while being invisible to everyone else.

CQS is calculated from your entire account history - comment quality, removal rate, report frequency, and community feedback patterns. Accounts used primarily for promotional commenting develop a low CQS rapidly.

This is why choosing a trusted comment service matters enormously. Services that use fresh accounts with no organic history guarantee low CQS and invisible comments.

What Makes Comments Genuinely Undetectable? Quality.

Reddit’s detection systems are sophisticated. But they all share a common design principle: they target patterns that deviate from genuine, valuable contributions.

The comments that pass every layer of detection do so because they meet the standard of real engagement.

Contextual depth from established accounts:

An account with 2+ years of organic history, diverse subreddit participation, and a healthy CQS posting a genuinely relevant comment that references specific details from the original post - that’s indistinguishable from authentic engagement.

Because functionally, it is authentic engagement.

Natural timing that reflects real behavior:

Comments delivered over hours rather than minutes, with natural intervals, reflect how real users actually browse and respond.

This isn’t a trick - it’s how thoughtful engagement actually works.

Comments that genuinely add value:

The core principle behind every detection system: low-quality, generic content gets flagged. Substantive comments that add something to the discussion - a new perspective, a relevant data point, a specific experience - clear every filter because they’re doing exactly what Reddit wants comments to do.

Comments that survive moderation also tend to drive lasting SEO value because search engines reward the same quality signals Reddit does.

Distinct voices and varied perspectives:

When each comment reads like it was written by a different person with a unique viewpoint, NLP template detection has nothing to flag.

This is the difference between mass-produced spam and genuine human contributions.

Reddit’s detection is tuned for volume-based, low-quality spam campaigns. The best services understand this - they build their entire model around quality, context, and authentic engagement patterns rather than trying to game detection systems.

A strategic approach to Reddit comments that prioritizes substance over volume produces comments that pass detection because they deserve to.

Why Quality Comments Don’t Trigger Detection

Every detection system Reddit uses - NLP analysis, AutoModerator rules, behavioral tracking, ML classifiers, CQS scoring - works by identifying patterns that deviate from organic behavior.

The comments that get caught share common traits: generic language, suspicious timing, uniform structure, accounts with no organic history.

The comments that don’t get caught share different traits: contextual relevance, natural timing, substantive value, accounts with established credibility.

Understanding Reddit’s fake comment detection isn’t about finding exploits in the system. It’s about understanding what “organic” actually looks like at a technical level - and ensuring every comment meets that standard.

For businesses investing in Reddit comment campaigns, this technical knowledge is non-negotiable. The difference between comments that drive results and comments that disappear silently comes down to whether your approach respects or ignores these detection layers.

If you’re evaluating comment services, the detection framework above is your checklist. Any service that can’t explain how it handles NLP scoring, CQS management, behavioral patterns, and subreddit-specific AutoModerator rules isn’t worth the risk. Buy Reddit Comments from quality providers who understand Reddit’s comment ecosystem and build their entire delivery model around these exact detection vectors.