Reddit banned over 61 million pieces of content from bot-driven accounts in Q3 2023 alone. That number has only climbed since.

If you operate multiple Reddit accounts for marketing, research, or community building, understanding how Reddit bot detection actually works is not optional. It is survival.

Reddit does not rely on a single filter. It runs a layered detection stack - four different systems that each catch what the others miss. Most people only know about one or two. Here is the full picture.

Layer 1: Network and Device Fingerprinting

Before you type a single character, Reddit already knows more about you than you think.

Every session with Reddit’s servers leaves a fingerprint. Each request carries your IP address and browser headers, and client-side scripts can collect your screen resolution, installed fonts, WebGL renderer, timezone, and dozens of other signals. Together they form a device profile that is often unique.

Reddit’s anti-abuse system - internally part of their “Safety” infrastructure - cross-references this fingerprint against every other account that has ever connected from the same environment.

Run two accounts from the same browser on the same IP? Reddit sees them as linked within seconds.

According to the Electronic Frontier Foundation’s 2010 Panopticlick study, 83.6% of the browsers it sampled carried a unique fingerprint. That means even switching accounts in the same browser - without changing any underlying parameters - leaves a trail that is functionally identical to signing your name.
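To make the linking idea concrete, here is a minimal sketch of how a detection system could group accounts by device fingerprint. It is an illustration only, not Reddit's actual implementation: the function names (`fingerprint_hash`, `linked_accounts`), the signal keys, and the sample sessions are all hypothetical, and a production system would presumably use fuzzy matching rather than an exact hash.

```python
import hashlib
from collections import defaultdict


def fingerprint_hash(signals: dict) -> str:
    """Collapse a dict of device signals into one stable identifier."""
    # Sort the keys so identical signals always produce the same hash.
    canonical = "|".join(f"{key}={signals[key]}" for key in sorted(signals))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def linked_accounts(sessions):
    """Return groups of accounts that were seen with the same fingerprint."""
    groups = defaultdict(set)
    for account, signals in sessions:
        groups[fingerprint_hash(signals)].add(account)
    # Only fingerprints shared by two or more accounts count as a link.
    return [sorted(accounts) for accounts in groups.values() if len(accounts) > 1]


# Hypothetical sample data: two accounts share one environment.
sessions = [
    ("alice", {"ip": "203.0.113.7", "user_agent": "UA-1",
               "timezone": "UTC+9", "screen": "1920x1080"}),
    ("bob", {"ip": "203.0.113.7", "user_agent": "UA-1",
             "timezone": "UTC+9", "screen": "1920x1080"}),
    ("carol", {"ip": "198.51.100.4", "user_agent": "UA-2",
               "timezone": "UTC-5", "screen": "1440x900"}),
]

print(linked_accounts(sessions))  # [['alice', 'bob']]
```

An exact hash only links environments that match on every signal. Catching "near-identical" fingerprints would need a similarity measure across the individual signals instead.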

What triggers Layer 1 flags:

- Multiple accounts sharing the same IP address or IP subnet

- Identical or near-identical browser fingerprints across accounts

- Rapid account switching from the same device

- VPN or proxy IPs that appear on known datacenter blocklists

- Sudden geographic jumps that defy physical travel time (logging in from Tokyo, then from New York 10 minutes later)
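The last trigger in that list is the easiest to picture in code. The sketch below computes the great-circle distance between two logins with the haversine formula and flags the pair when the implied speed is implausible. The 1,000 km/h threshold, the function names, and the coordinates are assumptions chosen for illustration. How Reddit actually evaluates geographic jumps is not public.

```python
import math

EARTH_RADIUS_KM = 6371.0
MAX_PLAUSIBLE_KMH = 1000.0  # assumed cutoff, roughly airliner cruising speed


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points, in kilometres."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = (math.sin(d_phi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def is_impossible_travel(login_a, login_b, max_kmh=MAX_PLAUSIBLE_KMH):
    """Each login is a (latitude, longitude, unix_seconds) tuple."""
    distance = haversine_km(login_a[0], login_a[1], login_b[0], login_b[1])
    hours = abs(login_b[2] - login_a[2]) / 3600.0
    if hours == 0:
        # Simultaneous logins from two different places cannot be travel.
        return distance > 0
    return distance / hours > max_kmh


# The example from the list: Tokyo, then New York 10 minutes later.
tokyo = (35.68, 139.69, 0)
new_york = (40.71, -74.01, 600)

print(is_impossible_travel(tokyo, new_york))  # True
```

Tokyo and New York are roughly 10,800 km apart, so a 10-minute gap implies a speed far beyond any aircraft.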

This is why proper account management tools matter. Residential proxies, anti-detect browsers, and separated browser profiles exist specifically to give each account a distinct, realistic fingerprint.

Reddit has been refining these signals since at least 2018, when they partnered with cybersecurity firms to combat election-related manipulation. The system is not perfect - but it catches the low-effort operators immediately.

Layer 2: How Reddit Bot Detection Analyzes Behavior

Fingerprinting catches shared infrastructure. Behavioral analysis catches shared patterns.

Reddit’s machine learning models - built on years of labeled data from banned Reddit bots - track how accounts interact with the platform over time. These models build a behavioral profile for every account and flag statistical anomalies.

Research from Stanford’s Internet Observatory has documented how platforms like Reddit use temporal analysis and interaction graphs to identify coordinated inauthentic behavior.

The core idea: real humans are messy and unpredictable. Bots are not.

Behavioral signals Reddit tracks:

- Posting tempo: Humans post in bursts with irregular gaps. Bots post at metronomically consistent intervals. An account that posts every 14 minutes, 24 hours a day, is not a person.

- Vote timing: When five accounts all upvote the same post within 90 seconds of publication, that is a coordination signal. Reddit’s vote manipulation detection specifically watches for temporal clustering around specific content.

- Content similarity: Accounts that post the same URL across multiple subreddits, or that use suspiciously similar phrasing, get flagged. Reddit uses text similarity scoring - likely TF-IDF or embeddings-based - to catch rephrased duplicates.

- Session behavior: How long between login and first action? How deep into comment threads does the account scroll? Does the account ever just browse without posting? Reddit bots tend to skip the browsing entirely and jump straight to action.

- Subreddit diversity: An account that only ever interacts with three subreddits - and those three subreddits happen to be promotional targets - looks very different from an organic user who wanders across dozens of communities.
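Three of the signals above reduce to simple statistics. The sketch below shows one plausible way to measure each: the coefficient of variation of gaps between posts (posting tempo), a count of votes landing shortly after publication (vote timing), and the Shannon entropy of an account's subreddit activity (subreddit diversity). The function names, the 90-second default window, and the sample data are assumptions for illustration. Reddit's real features and thresholds are not public.

```python
import math
import statistics
from collections import Counter


def gap_variation(timestamps):
    """Coefficient of variation of the gaps between consecutive posts.

    Values near 0 mean metronome-like posting; bursty human activity
    usually produces much larger values.
    """
    ordered = sorted(timestamps)
    gaps = [later - earlier for earlier, later in zip(ordered, ordered[1:])]
    if len(gaps) < 2:
        return None  # not enough history to judge
    mean_gap = statistics.mean(gaps)
    if mean_gap == 0:
        return 0.0
    return statistics.pstdev(gaps) / mean_gap


def votes_in_window(vote_times, published_at, window_seconds=90):
    """Count votes cast within window_seconds after publication."""
    return sum(1 for t in vote_times if 0 <= t - published_at <= window_seconds)


def subreddit_entropy(subreddits):
    """Shannon entropy (in bits) of where an account is active."""
    counts = Counter(subreddits)
    total = sum(counts.values())
    return sum((c / total) * math.log2(total / c) for c in counts.values())


# Hypothetical timestamps in seconds: one post every 14 minutes vs. bursts.
metronome = [i * 840 for i in range(10)]
bursty = [0, 120, 300, 7200, 7500, 90000]

print(gap_variation(metronome))     # 0.0
print(gap_variation(bursty) > 1.0)  # True
print(votes_in_window([10, 25, 40, 70, 85, 4000], published_at=0))  # 5
print(subreddit_entropy(["a", "b", "c", "d"]))  # 2.0
```

No single number here proves anything on its own. A realistic model would combine many such features and compare them against the distribution seen across ordinary accounts.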

A 2024 study published by researchers at Binghamton University found that behavioral analysis alone could identify Reddit bot accounts with 96% accuracy when models were trained on posting rhythm, comment depth, and subreddit diversity metrics.

This is exactly why warming up accounts properly is critical.

An account that suddenly shifts from zero activity to aggressive posting triggers behavioral anomaly detection. Gradual, varied activity that mimics organic usage patterns flies under the radar.
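The "sudden shift" described above can be sketched as a simple z-score test: compare the latest day's activity with the account's own trailing baseline. Everything specific here is an assumption made for illustration, including the 14-day window, the threshold of 3, the floor on the standard deviation, and the function name `activity_spike`. It is a textbook anomaly check, not a description of Reddit's model.

```python
import statistics


def activity_spike(daily_counts, window=14, z_threshold=3.0):
    """Return True if the latest day is far above the trailing baseline.

    daily_counts is a list of actions per day, oldest first.
    """
    if len(daily_counts) < window + 1:
        return False  # not enough history to form a baseline
    baseline = daily_counts[-(window + 1):-1]
    today = daily_counts[-1]
    mean = statistics.mean(baseline)
    # Floor the spread at 1.0 so a completely dormant baseline
    # (standard deviation of zero) does not cause division by zero.
    spread = max(statistics.pstdev(baseline), 1.0)
    return (today - mean) / spread > z_threshold


# Hypothetical histories: a dormant account that erupts vs. a gradual ramp.
dormant_then_burst = [0] * 14 + [25]
gradual_ramp = [1, 2, 2, 3, 3, 4, 4, 5, 5, 6, 6, 7, 7, 8, 9]

print(activity_spike(dormant_then_burst))  # True
print(activity_spike(gradual_ramp))        # False
```

The dormant account jumps 25 standard units above its baseline, while the gradual ramp sits about 2 units above its own recent average and stays under the threshold.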

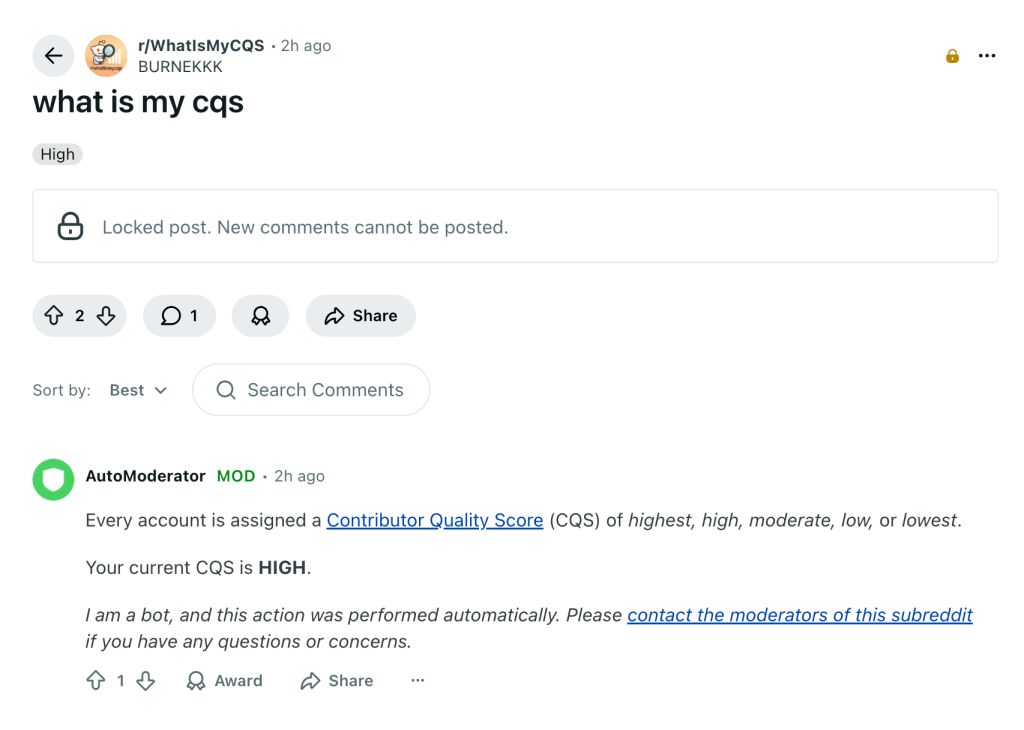

Layer 3: Contributor Quality Score (CQS)

CQS is Reddit’s internal reputation metric, and most people drastically underestimate its importance.

Unlike karma - which is public and easily farmed - CQS is a hidden score that Reddit calculates based on the quality of an account’s contributions.

Reddit introduced CQS as part of their push to weight “good faith participation” over raw engagement volume.

According to Reddit’s own documentation on content quality, CQS factors include:

- Ratio of removed vs. approved content

- How often an account’s posts and comments get reported

- Whether moderators consistently approve or remove the account’s contributions

- Community feedback signals (upvote-to-downvote ratio in context)

- Account age and consistency of participation

A low CQS does not immediately ban an account. Instead, it quietly degrades visibility. Posts from low-CQS accounts get pushed into spam filters more aggressively. Comments may be silently collapsed. The account still functions - it just stops reaching anyone.

This is shadow suppression, not a shadowban in the traditional sense. The account owner often does not realize anything has changed.

For anyone running multiple accounts, CQS is the metric that separates usable accounts from dead weight.

An aged account with high karma but terrible CQS - because it spent months posting low-quality promotional content - performs worse than a newer account with genuine engagement history.

You can learn more about how Reddit verifies and scores accounts to understand what makes a high-CQS profile.

Layer 4: Human Moderator Review

Automated systems handle scale. Humans handle nuance.

Reddit’s 100,000+ volunteer moderators are the final detection layer. They see things algorithms miss: context-inappropriate comments, subtly off-topic self-promotion, and the “uncanny valley” of accounts that technically follow every rule but clearly are not genuine participants.

Moderators have access to tools like:

- Mod log history showing an account’s full interaction record within a subreddit

- Toolbox browser extension providing cross-subreddit ban data

- BotDefense and similar bot-list services that maintain shared databases of known Reddit bot accounts

- Reddit’s native mod queue, which surfaces AI-flagged content for human review

A 2023 survey by the Pew Research Center found that Reddit’s volunteer moderation structure is one of the platform’s defining features - and one of the hardest to game, precisely because human judgment adapts faster than bot operators can iterate.

When a moderator bans an account, that signal feeds back into Reddit’s automated systems. The fingerprint, behavioral patterns, and CQS profile of the banned account become training data for the next generation of detection models. It is a feedback loop that constantly tightens.

Subreddits with strict posting requirements - minimum karma thresholds, account age gates, manual approval queues - add yet another filter layer that purely automated accounts struggle to clear.

What Reddit Bot and Fake Account Detection Still Misses

No system is perfect. Reddit’s detection stack has real blind spots.

1. Aged, organically-built accounts pass every automated check

If an account has years of genuine activity, diverse subreddit participation, and strong CQS, the automated layers have no reason to flag it. The fingerprinting layer only catches accounts that share infrastructure.

A well-maintained account on clean residential infrastructure looks identical to any other real user.

2. Slow-drip promotion is nearly invisible

An account that posts valuable content 95% of the time and drops a promotional link 5% of the time generates positive behavioral signals.

The machine learning models trained on obvious spam patterns do not flag accounts that look like enthusiastic community members who occasionally mention a product they genuinely use.

3. Small-scale operations avoid coordination triggers

Reddit’s vote manipulation detection is calibrated for bot networks running dozens or hundreds of accounts in concert.

Two or three accounts operated carefully, with staggered timing and independent activity patterns, stay below the statistical threshold.

4. Novel behavior patterns have no training data

Machine learning models are backward-looking. They catch patterns they have seen before.

Users who develop genuinely new approaches have a window before the models adapt.

The gap between detection and evasion is not about exploiting bugs. It is about understanding that Reddit’s systems are optimized for catching high-volume, low-sophistication operations - because those cause the most visible damage to the platform.

As for whether buying accounts introduces detection risk, the key variable is account quality, not the act of acquisition itself.

How to Stay Ahead of Every Detection Layer

Understanding detection is only useful if it changes how you operate.

- Layer 1 defense - isolate every account: Separate browser profiles with distinct fingerprints. Residential proxies - never datacenter IPs. Unique device parameters per account. Never let two accounts share any infrastructure.

- Layer 2 defense - behave like a human: Vary posting times. Browse without acting. Engage with content outside your target subreddits. Break predictable patterns. If you catch yourself doing the same thing at the same time every day, the algorithm will catch you too.

- Layer 3 defense - protect CQS relentlessly: Post genuinely useful content. Avoid subreddits with aggressive automod filters until your account has established history. Never spam. A high CQS is the single most valuable asset an account can have - harder to build than karma and impossible to fake.

- Layer 4 defense - earn moderator trust: Participate authentically in communities before ever posting anything promotional. Answer questions. Add value. Moderators remember accounts that contribute - and they remember accounts that do not.

The users who get caught are almost always the ones who treat Reddit like a broadcast channel rather than a community.

The ones who succeed long-term are the ones who understand that every detection layer is ultimately measuring the same thing: does this account act like a real person who genuinely participates?

If you are building a multi-account strategy, the foundation is accounts that already have the history, karma, and CQS to pass every layer. The detection stack is sophisticated - but it is designed to catch fakes. Accounts with real history are not fakes. They are transfers.

Understanding that distinction often determines whether an account gets caught or gets results.